The most vocal critics of unregulated AI research usually don’t mince words. Elon Musk, probably the most famous individual sounding the alarm, has described AI using a variety of colorful language, including “summoning the demon,” “more dangerous than nukes” and “capable of vastly more than almost anyone knows.”

Musk isn’t alone in worrying about the “insane” (his word) lack of regulatory oversight for AI development. His isn’t the only opinion worth considering, though. Brilliant scientists, philosophers and engineers are on the record saying they believe the doomsaying is melodramatic.

The truth probably lies somewhere in the middle. However, the safety and security of the human race demand that we take even unlikely concerns about AI deadly seriously. Here’s a look at several of the top ones.

Automation and Unemployment

The most immediate, realistic concern about artificial intelligence is the likelihood of its displacing human workers. McKinsey estimated in 2016 that 78% of predictable jobs and 25% of unpredictable ones are at a high risk of automation.

Technology has steadily and consistently increased the amount of work possible per hour of human labor. This has cost us entire job categories along the way but has also created new ones.

If robots and artificial intelligence eliminate 800 million jobs by 2030, as some research suggests, we may be entering a period of unprecedented economic uncertainty.

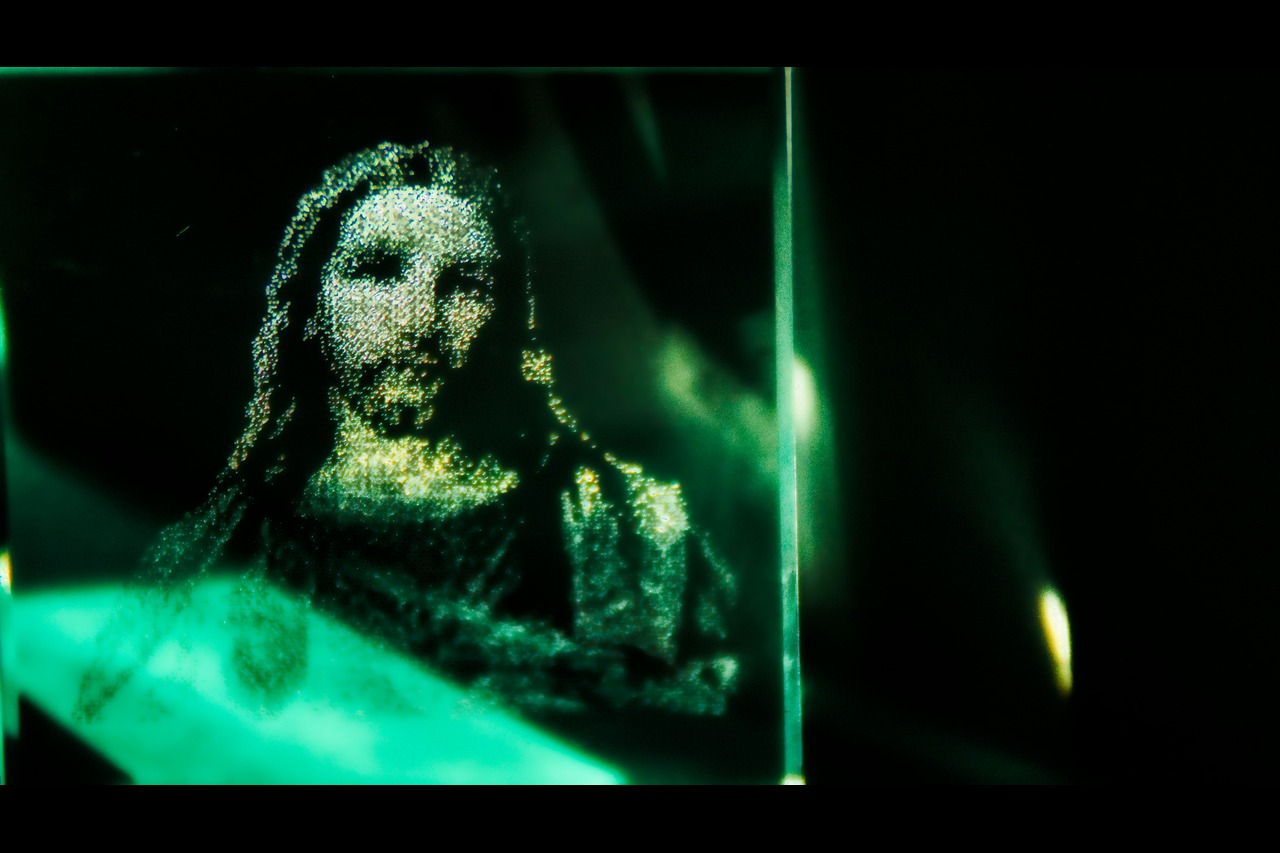

Filter Bubbles, Social Engineering and Deepfakes

One of the greatest concerns about artificial intelligence is already coming to fruition right before our eyes. Today’s social media websites and news recommendation algorithms serve users content they’re likely to click on, which is not necessarily content that is truthful.

This is a convenient feature, but it results in filter bubbles. These occur when people see only content they’re already likely to believe and agree with. Scientists and engineers are working hard to make sure AI-powered recommendation engines don’t damage the democratic process.
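The feedback loop behind a filter bubble can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not how any real platform works: the article list, the topic labels, the smoothed click-rate estimate and the rule that the user clicks everything shown are all invented for illustration.

```python
from collections import Counter

# Hypothetical catalog of (headline, topic) pairs, invented for illustration.
ARTICLES = [
    ("Tax cuts boost growth", "right"),
    ("Border policy praised", "right"),
    ("Unions win wage deal", "left"),
    ("Climate bill advances", "left"),
    ("Local team wins final", "neutral"),
    ("New phone released", "neutral"),
]
TOPICS = {topic for _, topic in ARTICLES}


def predicted_click_rate(topic, history):
    """Estimate click likelihood as the topic's smoothed share of past clicks."""
    counts = Counter(history)
    return (counts[topic] + 1) / (len(history) + len(TOPICS))


def recommend(history, k=2):
    """Return the k articles the user is predicted to be most likely to click."""
    ranked = sorted(
        ARTICLES,
        key=lambda article: predicted_click_rate(article[1], history),
        reverse=True,
    )
    return ranked[:k]


def simulate(rounds=5):
    """Start from a single click and assume the user clicks everything shown."""
    history = ["left"]
    for _ in range(rounds):
        feed = recommend(history)
        history.extend(topic for _, topic in feed)
    return history


if __name__ == "__main__":
    print(Counter(simulate()))
```

In this toy setup a single early click is enough to tilt every later feed toward the same topic, because the ranking optimizes only for predicted clicks and never for accuracy or variety.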

Another AI-related worry is that corporate or state entities will deliberately spread misinformation.

So-called “deepfakes” use machine learning and artificial intelligence to create extremely convincing fake videos featuring real public figures. In what was either an honest blunder or an attempt to “test the boundaries,” even the president of the United States has been discovered spreading deepfake videos online.

Deepfakes are an especially insidious form of propaganda because individuals can create them quickly with AI and because, for average internet users at least, they’re often extraordinarily convincing.

Absolute Global Surveillance

A 100-page paper called “The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation,” released in 2018 by 14 public and private groups, suggests that AI could cause anything from “minor chaos” to “serious harm” within five years.

According to the paper, there are several worrying possibilities where “malicious uses of AI” are concerned:

- Machine learning is the process of training an AI using large amounts of data. Privacy experts worry about the huge net that AI companies may cast in the search for “training data.”

- Once it’s online, an AI could be used to swiftly hack into high-value databases or the online accounts of individuals or leaders.

- Self-driving cars, drones, public infrastructure, schools, business offices and medical machines all constitute connected environments or devices. A rogue or malicious AI could compromise these devices or turn them toward social engineering purposes.

Elon Musk and Russian President Vladimir Putin agree — any nation that masters AI could become “the ruler of the world.”

Racist and Biased Artificial Intelligence

Human beings are flawed creatures. Any attempt at creating a new form of life runs the risk of passing on human blind spots, such as racism.

Engineers wanted to know what would happen if they tasked an AI with predicting the likelihood of a former criminal committing another crime in the future. In this example, the AI underestimated the likelihood of white criminals re-offending. Meanwhile, it overestimated the likelihood of people of color doing the same.
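One common way to check for this kind of skew is to compare error rates across groups. The sketch below is a minimal illustration rather than the method used in the study described above: the records, the group labels "A" and "B" and the function name are all hypothetical. A high false positive rate means the model overestimates risk for a group, while a high false negative rate means it underestimates it.

```python
from collections import defaultdict

# Invented toy records: (group, predicted_high_risk, actually_reoffended).
RECORDS = [
    ("A", True, False), ("A", True, True), ("A", True, False), ("A", False, False),
    ("B", False, True), ("B", False, False), ("B", True, True), ("B", False, True),
]


def error_rates(records):
    """Return {group: (false_positive_rate, false_negative_rate)}."""
    tally = defaultdict(lambda: {"fp": 0, "neg": 0, "fn": 0, "pos": 0})
    for group, predicted, actual in records:
        t = tally[group]
        if actual:
            t["pos"] += 1
            if not predicted:
                t["fn"] += 1  # re-offended but was rated low risk
        else:
            t["neg"] += 1
            if predicted:
                t["fp"] += 1  # did not re-offend but was rated high risk
    return {
        group: (
            t["fp"] / t["neg"] if t["neg"] else 0.0,
            t["fn"] / t["pos"] if t["pos"] else 0.0,
        )
        for group, t in tally.items()
    }


if __name__ == "__main__":
    for group, (fpr, fnr) in error_rates(RECORDS).items():
        print(f"group {group}: FPR={fpr:.2f} FNR={fnr:.2f}")
```

With these made-up records, group A is mostly overestimated and group B is mostly underestimated, which is the pattern an audit of a real risk model would look for.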

In another experiment to test an AI’s tendency toward bias, the programmers’ AI favored white banking customers while underestimating the creditworthiness of minorities.

Can Humanity and Intelligent Machines Co-Exist?

Finally, to look at things from another perspective, it’s worth considering what the ethical treatment of AIs themselves would actually look like. Today, there is an ongoing global campaign to recognize the great apes as deserving of rights similar to human rights. Humanity must prepare to have a similar conversation about the rights of artificially intelligent beings.

There’s a lot we don’t know about AI — including, incredibly, even how it works. Even if Elon Musk is overstating the most severe concerns about AI, there’s plenty of reason for caution.

Now is the right time to encourage an ethical, 100% transparent and cautiously deliberate approach to developing AI. Nations have been slow to codify these worries into regulatory oversight for AI companies, which means the window to act might be closing fast.
